Do the two versions have the same name? Or, to put it another way, how do I know whether I have the GPU version installed?

I downloaded and installed deep-art-effects-win-setup-1.2.5-gpu.exe, but when I run it, Info/About says “Deep Art Effects Desktop client v1.2.5”, with no mention of it being the GPU version.

Also, when I run Start DAE - GPU, it says "Error: CPU version of Deep Art Effects detected - please install GPU version."

I’ll have to either update or remove StartDAE…

Anyway, the only way to tell in DAE itself is to start it, click File -> Preferences and see whether the Use GPU box is checked (you can’t actually click it, but it’s the only visual sign of the GPU version).

DAE has never been too interested in the concept of usability. I’ve moaned about this for 18+ months and get completely ignored on that particular subject.

To be fair, they do listen to some of the suggestions: we’ve got the delete cache option and lots of CLI additions.

I really should go do the “Review DAE on Trustpilot” email they sent recently. They said to be honest (well, they asked for it…)

I looked at that, but it was greyed out, so I wasn’t sure whether it applied.

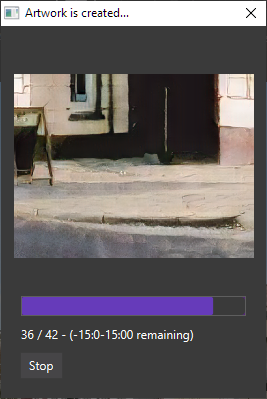

One suggestion would be to make it multi-threaded. As far as I can see, it’s only using one of my 12 threads and isn’t always making much use of the GPU. I currently have a render that has been running for a long time with no apparent progress.

The numbers on the right just keep increasing, and I’ve no idea what they signify. Maybe it has hung in some way, but it seems to be using the CPU and little or no GPU.

On reflection, I think this is a significant bug. When I click “Stop”, it says “stopping …” but never actually stops, and the only way out is to kill the java process in Task Manager. So it’s either a bug in Java or in DAE.

If you check the log, there’s usually some kind of error listed. If the GPU is working now and you’re doing a large render, running out of GPU memory is quite easy, as the AI eats memory in large quantities. A workaround is to use a smaller chunk size.
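Why a smaller chunk size helps: if the image is processed one tile at a time, the memory needed at any moment scales with the tile’s area rather than the whole image’s. The sketch below shows only that general idea. It assumes that DAE’s chunk size means square tiles of `chunk` pixels per side, which is an unconfirmed guess, and the function names are hypothetical, not part of DAE.

```python
from typing import Iterator, Tuple

# (left, top, right, bottom) in pixels
Box = Tuple[int, int, int, int]


def tile_boxes(width: int, height: int, chunk: int) -> Iterator[Box]:
    """Yield chunk x chunk tiles covering a width x height image.

    Hypothetical helper: edge tiles are clipped to the image bounds.
    Real style-transfer tilers usually overlap tiles to hide seams;
    that is omitted here for clarity.
    """
    if chunk <= 0:
        raise ValueError("chunk must be positive")
    for top in range(0, height, chunk):
        for left in range(0, width, chunk):
            yield (left, top, min(left + chunk, width), min(top + chunk, height))


def largest_tile_pixels(width: int, height: int, chunk: int) -> int:
    """Pixel count of the biggest tile, a rough proxy for peak memory per step."""
    return min(chunk, width) * min(chunk, height)


if __name__ == "__main__":
    w, h = 4000, 3000
    for chunk in (2000, 1000, 500):
        n = len(list(tile_boxes(w, h, chunk)))
        print(chunk, n, largest_tile_pixels(w, h, chunk))
```

For a 4000x3000 image, a chunk of 1000 gives 12 tiles of at most 1,000,000 pixels each, versus 12,000,000 pixels for the whole image in one go. The cost is more round trips to the GPU.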

I don’t think the devs have read the chapter on clean exception handling in the manual.

My crummy laptop’s only got 2 GB for the GPU, so I was always running into issues. Going for a CPU render, while slow, gets around that to an extent (as you typically have more main memory than GPU memory).

An odd side effect of GPU rendering I’ve noticed is that when you load a large image, you can lose a lot of time while it’s transferred to the GPU. To be fair, I noticed this mostly on my Jetson Nano (sort of a Raspberry Pi with a GPU and a whopping big heatsink).