YouTube blurb (video below): this expands on some facts I mentioned in my post “Holy Cuda 10.0, Batman”.

This is something I wrote ages ago

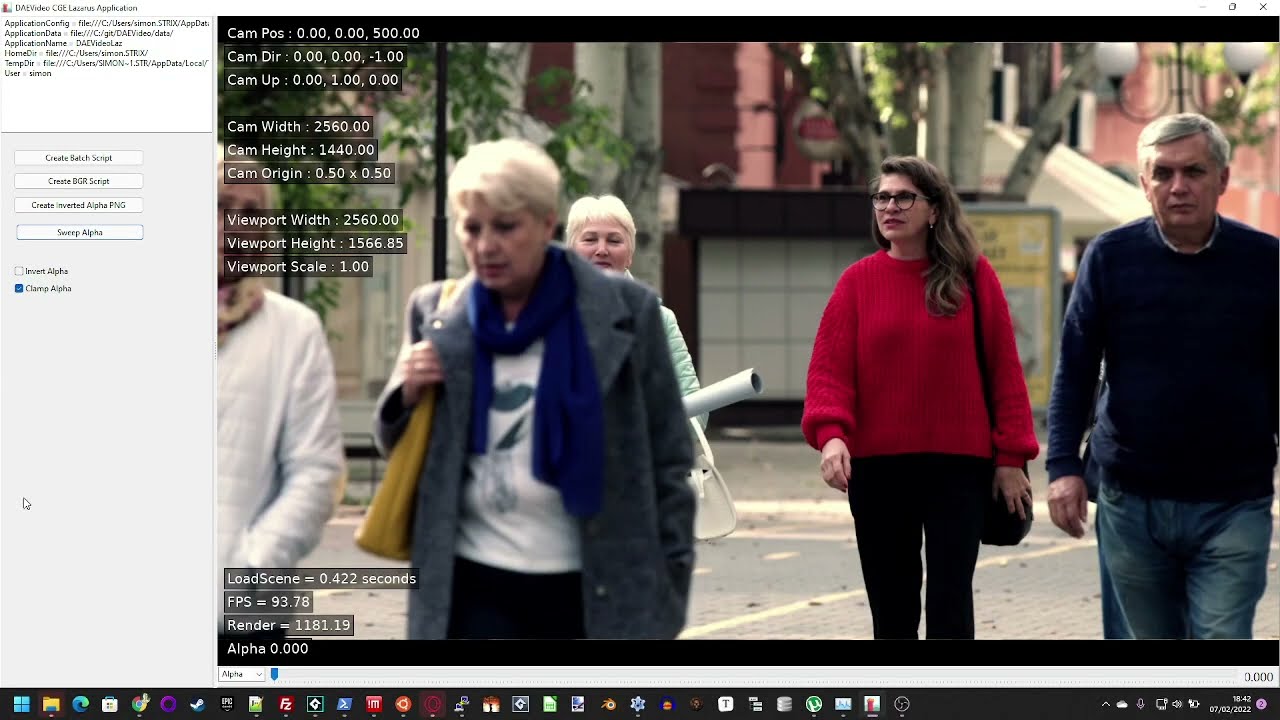

Deep Art Effects (DAE) background removal actually creates a depth map. This video illustrates what happens if you use that information to control how much of the background is removed.
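
To make the idea concrete, here is a minimal sketch of using such a map to decide what counts as background. It is not DAE's API or how DAE works internally; it assumes the map is a 2D grid of floats in the range 0.0 to 1.0 where higher means "more foreground", and the function names `alpha_mask` and `apply_mask` are hypothetical.

```python
# Hypothetical sketch, not DAE's API. Assumes the depth map is a 2D list of
# floats in [0.0, 1.0], where higher values mean "more likely foreground".

def alpha_mask(depth_map, cutoff):
    """Return a binary mask: 1 keeps the pixel, 0 removes it as background."""
    return [[1 if value >= cutoff else 0 for value in row] for row in depth_map]

def apply_mask(pixels, mask):
    """Attach alpha to RGB pixels; removed pixels become fully transparent."""
    return [
        [(r, g, b, 255 if keep else 0) for (r, g, b), keep in zip(pixel_row, mask_row)]
        for pixel_row, mask_row in zip(pixels, mask)
    ]

depth = [[0.1, 0.5], [0.8, 0.95]]
pixels = [[(10, 20, 30), (40, 50, 60)], [(70, 80, 90), (100, 110, 120)]]
mask = alpha_mask(depth, 0.5)
print(mask)  # [[0, 1], [1, 1]]
print(apply_mask(pixels, mask))
```

Raising the cutoff removes more of the picture; lowering it keeps more. A real implementation would probably use soft (partial) alpha rather than a hard 0/255 cut.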

DAE uses the frame captured just after I click the Sweep Alpha button on my test rig. If you watch carefully, you can see that (with this image) it rapidly removes more and more of the background, then slows down and very gradually cuts away more of the image until finally I remove all of the image.
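
The "fast at first, then slow" behaviour is what you would expect from sweeping a cutoff across a map whose values are bunched up at one end. The sketch below is a hypothetical illustration, not the code in my test rig: `sweep_alpha` and `removed_fraction` are made-up names, and the map is assumed to hold floats in the range 0.0 to 1.0.

```python
# Hypothetical sketch of an alpha sweep. Assumes a 2D list of floats in
# [0.0, 1.0]; a pixel is removed once its value falls below the cutoff.

def removed_fraction(depth_map, cutoff):
    """Fraction of pixels whose value is below the cutoff (i.e. removed)."""
    values = [v for row in depth_map for v in row]
    removed = sum(1 for v in values if v < cutoff)
    return removed / len(values)

def sweep_alpha(depth_map, steps=10):
    """Yield (cutoff, fraction_removed) pairs as the cutoff rises from 0 to 1.

    Note: a pixel with a value of exactly 1.0 would survive the final step.
    """
    for i in range(steps + 1):
        cutoff = i / steps
        yield cutoff, removed_fraction(depth_map, cutoff)

# Mostly low values (background) plus a couple of high ones (the person).
depth = [[0.05, 0.1, 0.15, 0.2], [0.25, 0.3, 0.9, 0.95]]
for cutoff, fraction in sweep_alpha(depth, steps=4):
    print(f"cutoff={cutoff:.2f} removed={fraction:.3f}")
```

With these sample values half the pixels are gone by a cutoff of 0.25, nothing more goes between 0.5 and 0.75, and the last two pixels only disappear at 1.0, which mirrors the rapid-then-slow removal in the video.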

DAE could offer this facility in their app (though it would probably be confusing).

Also note that because the network DAE uses is trained only on humans, this only works with people: stick a horse and a person in this thing and the horse is “Background”.

It is possible that DAE could expand the range of things it sees by adding more recognisers; datasets exist for many objects and animals.
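
One simple way extra recognisers could be combined is to take the per-pixel maximum of their foreground maps, so anything any recogniser considers foreground survives. This is only an assumption about how it might be done, not how DAE works; `combine_maps` is a hypothetical helper and each map is assumed to be a same-sized 2D grid of confidences.

```python
# Hypothetical sketch: fuse per-class foreground maps (e.g. a "person"
# recogniser and a "horse" recogniser) with a per-pixel maximum.

def combine_maps(*maps):
    """Per-pixel maximum across equally sized 2D confidence maps."""
    return [
        [max(values) for values in zip(*rows)]
        for rows in zip(*maps)
    ]

person = [[0.9, 0.1], [0.0, 0.2]]
horse = [[0.0, 0.1], [0.8, 0.7]]
print(combine_maps(person, horse))  # [[0.9, 0.1], [0.8, 0.7]]
```

With the horse map included, the horse pixels now score high enough to survive a cutoff instead of being treated as background.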

The video (most of it is very boring and I didn’t bother editing it; it’s a quick and dirty job, thrown together to show the point):