I know what format the models are in, as I love Sherlock Holmes. I noticed the styles are encrypted, which is not a concern. My problem is that I would prefer calling my models inside DAE instead of from another programming language… to add to the batch reason why the desktop version does not close Java.

So an add-on to make our own and share would be nice.

What was the model format again? I found the github but lost it again. Started with O as I remember.

Well, by the size of the file it’s ONNX 16-bit… but the headers have been changed on them. I use Netron to optimize models and the file structure was totally different. I totally understand, as you wouldn’t want the models that you created as a business to be used elsewhere; however, an import with a patch feature, or just being able to import, would be a nice touch. For me, generating a model with a crappy card takes 82h each; however, I can launch creation 4 times (ya, the PC is totally useless at that point) and end up with 4 new models at the end of day 4. Which for me is not that bad: 1 new add-on per day, can’t complain. I could use a remote PC or Google labs to make more, faster, but it’s not a current rush.

Yep - that’s it

Reminder to self…

https://docs.openvino.ai/latest/notebooks/212-onnx-style-transfer-with-output.html

I sorta fancy trying out TF2 while waiting for DAE to get there

I’m the same: I keep trying new things, seeing how far I can push them. Recently I was testing the web version of ONNX and boy does that crash my PC.

Well, I got the demo up OK locally

Problem is that it’s not using CUDA out of the box - Python - bloody awful language when it comes to stuff like this (everything wants diffo versions of everything)

Apparently this should work with CUDA but that requires yet another diffo version of Python (been thru 2 getting this far) and an extra library to do TF2-GPU with the option to compile TF2 yourself (the last bit is actually a good thing - you can tune TF to your specific GPU/CPU)
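
For anyone hitting the same "no CUDA out of the box" wall, here is a rough sketch of how you could check for a GPU and fall back to CPU. It assumes the ONNX models are run through ONNX Runtime (`onnxruntime` or `onnxruntime-gpu`), which is not necessarily what the demo above uses; `pick_providers` and `open_session` are made-up helper names.

```python
def pick_providers(available):
    """Return ONNX Runtime providers in preference order: CUDA first, CPU as fallback."""
    preferred = ["CUDAExecutionProvider", "CPUExecutionProvider"]
    chosen = [p for p in preferred if p in available]
    # If nothing we recognise is listed, still ask for the CPU provider.
    return chosen or ["CPUExecutionProvider"]


def open_session(model_path):
    """Open an inference session on the GPU if this onnxruntime build supports it."""
    # Assumption: onnxruntime (or onnxruntime-gpu) is installed; imported lazily
    # so the helper above can be used without it.
    import onnxruntime as ort

    providers = pick_providers(ort.get_available_providers())
    session = ort.InferenceSession(model_path, providers=providers)
    print("Running on:", session.get_providers()[0])
    return session
```

If the printed provider is the CPU one even with a CUDA card present, the installed build most likely has no GPU support compiled in.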

Most research is hosted on Linux for TF and building a decent TF2 for Windows can be a real problem (sorta explains the delay in TF2 for DAE)

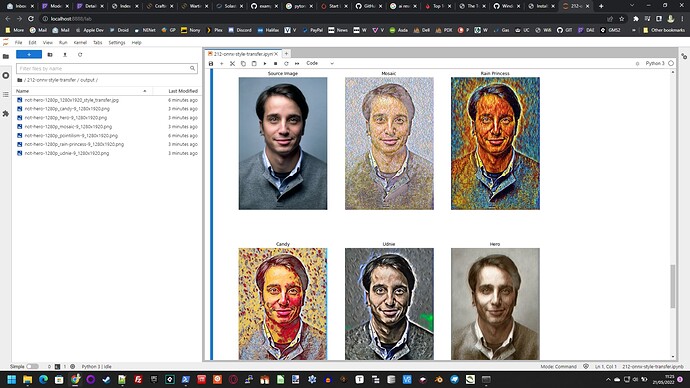

Anyway - this thing APPEARS to load the ONNX models OK

Ooh

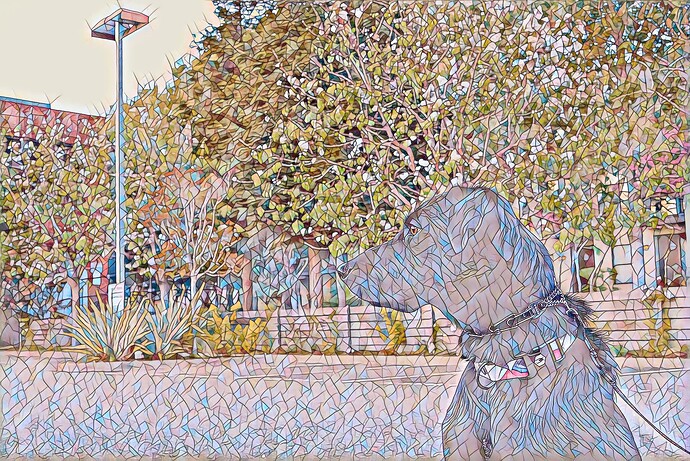

Input image from demo

Output image using Mosaic

Not fast (no GPU) but it works
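
A minimal sketch of what applying one of these styles looks like in code, assuming the model has a single input and a single output and wants a 1x3xHxW float tensor with pixel values left in 0..255 (the layout the published fast-neural-style ONNX models such as Mosaic are documented to use; other models may differ). The function names are made up, and `session` is assumed to be an already-open `onnxruntime.InferenceSession`.

```python
import numpy as np


def to_model_input(rgb):
    """HxWx3 uint8 image -> 1x3xHxW float32 tensor, values left in 0..255."""
    chw = np.transpose(rgb.astype(np.float32), (2, 0, 1))
    return chw[np.newaxis, ...]


def to_image(output):
    """1x3xHxW float tensor -> HxWx3 uint8 image, clipped to 0..255."""
    chw = np.clip(output[0], 0, 255)
    return np.transpose(chw, (1, 2, 0)).astype(np.uint8)


def stylise(session, rgb):
    """Run one image through an already-open onnxruntime.InferenceSession."""
    input_name = session.get_inputs()[0].name
    # Assumption: the model returns exactly one output tensor.
    (styled,) = session.run(None, {input_name: to_model_input(rgb)})
    return to_image(styled)
```

Some exported models only accept one fixed image size, so the input may need resizing first.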

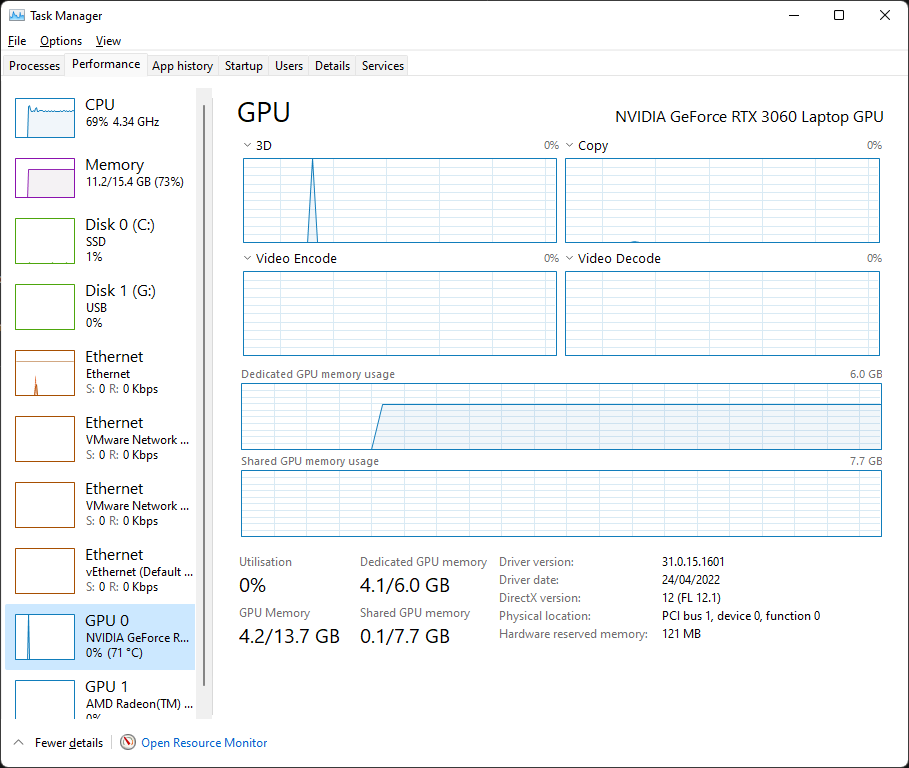

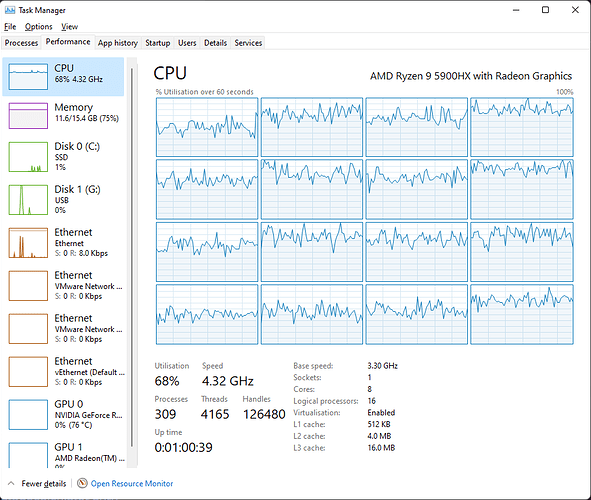

Ooh - my GPU is doing something…

(I’m trying to build a new style)

CPU’s having fun too

The aim here is to end up with an ONNX that can be used to style - a really nice thing would be the ability to add them to DAE if it works

Style creation time estimated at 2 hours - acceptable (RTX 3060 c/w Ryzen 7 5900HX Laptop)

2h? Well damn, that’s better than my 82h. =D

To add it to DAE you would need the file converted to the encoder format and added to styles.list (the only file that is crazy binary). The 2 cache files are just indexes, readable with a hex viewer or even WordPad.

So for your wish to happen you need a dev to either add it for you, or make a local add-on tool, or have a new version that gives that feature. I figured out by accident how sadistic the total set of files used in the coding is (I had exe set up as auto-decompress in one of my tools). Needless to say, I truly understand the length of time required to move this to TensorFlow 2, and they can take all the time they want / need…

GPU’s not too bad, but with the CPU load when making models using the COCO train pack I cannot run anything else.

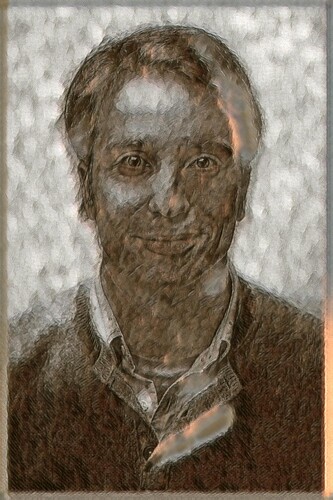

A (not so impressive) result - note this is not DAE but if they allowed ONNX user models it could be

Tomorrow I think I’ll try converting to ONNX…

Left = Original Style Image (Pinterest)

Right = Some bloke (Unsplash)

Middle = Styled (not using ONNX yet)

It shows promise - I’m re-doing the style with much more emphasis on the style image to see what that looks like - it’s almost finished (did the above hours ago)

Getting the Python to do this was a pain in the ass - after 2 hours the thing had a bug in the save routine, so I had to fix that and then train the style again. One thing I discovered is that the same stuff used to do this also has a Java implementation, so presumably DAE could use that as its TF link

I also tried using the Russian box (felt guilty doing that) - that was sooo slow compared to my 3060

The key is experimenting with it… There is a better build online to do this; I’ll find it in my links. I had to redo my PC this morning (it’s now 6:20ish pm) as I goofed on my registry of file extensions. Now that all is back to normal I’ll be able to find you the link with the better ONNX web-based builds, including the image libraries required to make better models. Btw, any model file bigger than 512x512 will work, but you won’t have a magical feel to it: you may end up with crisper results, but in the long run it may or may not produce something out of this world.

Shawn

Experimenting can have bad results

Oops - a bit too much style in that one…

I’ve just started another using a style that’s 256x256 as the ones I’ve tried so far were way bigger (1k x 1.4k = 20x pixels) - and it looks like that’s not affecting the runtime
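
The "20x pixels" figure is easy to sanity-check. A quick sketch, taking "1k x 1.4k" as exactly 1000 x 1400 (an assumption, the real image size isn't given):

```python
big = 1000 * 1400      # the "1k x 1.4k" style image (assumed exact size)
small = 256 * 256      # the 256x256 style image
ratio = big / small

print(f"{big:,} vs {small:,} pixels -> {ratio:.1f}x")
```

One plausible reason the runtime doesn't move, assuming the trainer works out the style statistics once up front and then spends its time on the training photos: the size of the style image barely figures in the total.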

If you want some pre-built models to experiment with I’ll stick mine somewhere if you like. ATM they’re in PyTorch format (so need converting to ONNX) - at least that’ll save you the 82 hours training a model. Applying the style is fast - it’s the creation that is slow…

Think I’ll drop DAE a line suggesting ONNX support with the view to letting us create our own Zoo - it’s a win-win situation as I see it

Something else I noticed was that my first attempt looks a lot like it’s just a Sepia tint - I ran a sepia tint over the original and that looks completely different (not nearly as good) so transfer is happening…

[Edit] I’ve scrapped the 256x256 - no speed diffo so bad comparison, but I’ll try again once I’ve got a nice model so I can see what difference it makes using a smaller style-image (if any) - filesize is currently 6.5M per model… Using COCO 2014 for training - two passes - so 165k trains in total. I’ll start next runs overnight (midnight here)
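
Where the 165k comes from, plus what it implies for throughput. This is a back-of-envelope sketch: the COCO 2014 training split has 82,783 images, and the run times plugged in are the 2 hour and 82 hour figures quoted earlier in the thread. Whether the 82 hour run used the same dataset and number of passes isn't stated, so treat that line as an assumption.

```python
COCO_TRAIN2014_IMAGES = 82_783   # size of the COCO 2014 training split
EPOCHS = 2                       # "two passes"

total = COCO_TRAIN2014_IMAGES * EPOCHS
print(f"{total:,} training images seen")   # 165,566


def images_per_second(hours):
    """Average throughput if the whole run takes the given number of hours."""
    return total / (hours * 3600)


print(f"{images_per_second(2):.1f} img/s for a 2 hour run")
# Assumption: same dataset and passes as above.
print(f"{images_per_second(82):.2f} img/s for an 82 hour run")
```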

Try with pictures of nature or animals for way better results (I noticed on people it just doesn’t work great)

Well, good luck with the trains and best of luck with the new results. Have a great weekend.

Shawn

Hmm - from a quick look at Coco it seems there aren’t a LOT of humans (just some). This makes me wonder what would happen if you trained against people?

Anyway - I’ve got three variations on my test model ready to run overnight as I wanna see what happens with those before I try diffo subjects.

My first go WAS going to be starry night - but everyone does that so…

I also wanna try some portraits with colour in them…

Indeed, COCO doesn’t have many humans. I’ll find the GitHub that has the different tables of training. Right now I’d love to get my hands on the original VGG19 collection, but I lack the storage space and would require an academic login.

Shawn

P.S: Can’t wait to see the results of your tests.

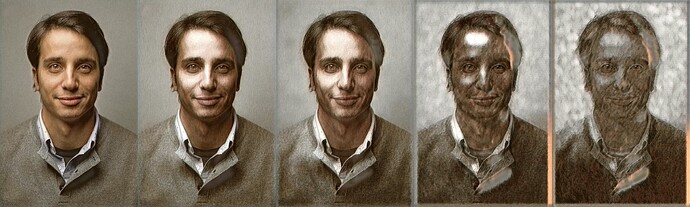

Here are the five results lined up next to each other for comparison - the ones shown above are at either end and the new ones in between making for easy comparison.

The order they’re in is increasing style weight - 1e10, 5e10, 1e11, 5e11 and 1e12 - Personally I like the middle one best. Next model(s) I wanna try some half-way between the weights used to get more idea of the way things change (that’s 8 hours+ in runtime though so overnight again…). A problem with properly researching what happens is the time it takes to make models…
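
One way to pick the "half-way" weights, sketched under an assumption: since the five weights span two orders of magnitude, half-way is taken here on a log scale (the geometric mean of each neighbouring pair) rather than the plain average. That is a choice for illustration, not something decided above.

```python
import math

tried = [1e10, 5e10, 1e11, 5e11, 1e12]   # style weights used so far


def geometric_midpoints(weights):
    """Log-scale mid-point between each pair of neighbouring weights."""
    return [math.sqrt(a * b) for a, b in zip(weights, weights[1:])]


for w in geometric_midpoints(tried):
    print(f"{w:.2e}")
```

That gives four extra weights, one in each gap, so four more training runs to fill in the picture.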

When it comes to games I love RPGs and they always have a character portrait. My initial reason for playing with DAE was the desire to find some way to personalise the player portraits in games that allow changing them.

I also wanna do ONNX conversion today as this way I can alter the python to directly output the more useful file format.

ONNX works (it’s the one marked Hero - bottom right)
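
For anyone wanting to do the same conversion, a minimal sketch of a PyTorch to ONNX export using `torch.onnx.export`. It is not the exact code used here: the helper names, the tensor names "input" and "output", the 224x224 dummy size and opset 11 are all illustrative assumptions.

```python
def export_settings():
    """Keyword arguments for torch.onnx.export; the names are illustrative."""
    return {
        "input_names": ["input"],
        "output_names": ["output"],
        # Let height and width vary so images of different sizes can be styled.
        "dynamic_axes": {
            "input": {2: "height", 3: "width"},
            "output": {2: "height", 3: "width"},
        },
        "opset_version": 11,  # assumption: pick whatever your runtime supports
    }


def export_to_onnx(model, onnx_path, height=224, width=224):
    """Trace a trained PyTorch style network with a dummy image and write it as ONNX."""
    # Assumption: PyTorch is installed; imported lazily so the settings helper
    # can be inspected without it.
    import torch

    model.eval()
    dummy = torch.zeros(1, 3, height, width)
    torch.onnx.export(model, dummy, onnx_path, **export_settings())
```

Not every network exports cleanly with variable image sizes; if it complains, dropping `dynamic_axes` and sticking to one size is the simpler route.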

Great job… now you’ve gotten yourself a new toy to play with. Results are not too shabby.

Shawn

Playing with “New Toy”

Source style image = Sketch 1 thumbnail

Well at least that gave you better results…

Not surprised that the 1st and last are the same. Get a copy of Netron, as model files (except for DAE’s, as they are encoded) are readable.

I’ll have more sites and tools to share, probably by Monday…

Shawn